Everybody Dumps Production At Least Once

Let me paint you a word picture.

You're a part-time university student doing full-time work as a web admin for a small e-commerce company. Due to a website overhaul, you've just been tasked with updating the product catalog of said e-commerce company. You need to update hundreds of products and move them to their new categories using the aging content management system. It's exactly the sort of drone work that comes your way and takes a few hours to carry out.

But you don't do it manually. You're in your third year of university and you're brimming with all kinds of new and interesting knowledge. You copy-paste a mishmash of SQL that will carry out most of the updates in one go. You take it to a dev and ask him to run the SQL for you.

He does.

And in one fell swoop, hundreds of products end up under one parent category.

You are me. And I am fucked.

ChatGPT made me white and pudgier

To make a long story short, I had to spend the next few hours hurriedly updating everything and putting the products in the correct category. The only saving grace here was that due to the website overhaul, the navigation was hardcoded and my error wasn't visible until you started digging into the product categories.

This was the first time I screwed up in a way that had an impact on the company I worked for. It wouldn't be the last. But the only thing I care about is that I haven't made the same mistake twice. So far.

Expect Mistakes to Happen. Fix the Process.

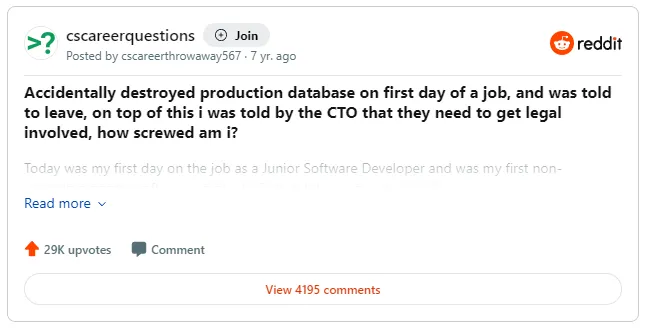

How screwed am I?

This Reddit post recently resurfaced and started making the rounds on tech Twitter.

To recap, a junior developer accidentally wiped the production database while setting up their dev environment. The setup documentation they were given used the production credentials by default, and the developer was supposed to replace them by generating their own.

For anyone who's been around a while, you can immediately see there's a process problem here. Among other things, there's no good reason for production credentials to be available in a setup document. And let's not even talk about the interaction with the CTO. Telling the new hire to "leave and never come back" after the company messed up isn't a good look.
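For a sense of what fixing that process could look like, here's a minimal Python sketch of a setup helper that never falls back to shared credentials. It assumes the developer's own connection string lives in an environment variable called `DEV_DATABASE_URL` and that production hosts are listed in `PRODUCTION_HOSTS`; both names are hypothetical and not taken from the Reddit post.

```python
import os
from urllib.parse import urlparse

# Hypothetical: hosts a developer setup script must never connect to.
PRODUCTION_HOSTS = {"db.prod.example.com"}


class UnsafeDatabaseError(RuntimeError):
    """Raised when setup would run with missing or production credentials."""


def dev_database_url(env=None):
    """Return the developer's own database URL, refusing production hosts.

    There is deliberately no default: if DEV_DATABASE_URL is unset, setup
    stops instead of falling back to credentials copied from a document.
    """
    env = os.environ if env is None else env
    url = env.get("DEV_DATABASE_URL")
    if not url:
        raise UnsafeDatabaseError(
            "DEV_DATABASE_URL is not set; generate your own credentials first"
        )
    host = urlparse(url).hostname
    if host in PRODUCTION_HOSTS:
        raise UnsafeDatabaseError(
            f"refusing to run setup against production host {host!r}"
        )
    return url
```

Calling `dev_database_url({"DEV_DATABASE_URL": "mysql://me@localhost/app_dev"})` hands the URL back unchanged, while a missing variable or a production host stops the script before it touches anything.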

This sort of error is all too common and to be expected when you're working with interconnected systems. Blaming the human behind the issue and ignoring the process failure that got you to this place will just ensure that this same mistake is repeated.

Going back to my earlier mistake, the following things come to mind:

- Don't let the new guy write SQL that updates hundreds of items in the product catalog without peer review.

- Test code in a test environment.

- Don't run SQL on production just because the new guy asked you to.

- Keep regular backups of your data. Or, at the very least, back up the specific tables before you run the SQL.

Any one of these process changes would have resulted in a better outcome for everyone concerned.
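To make the backup point concrete, here's a rough Python sketch of a "back up, then check" wrapper. It uses SQLite from the standard library as a stand-in for the CMS database, and the `products` table, `category_id` column and `checked_update` helper are all hypothetical. The idea: snapshot the table, run the update in a transaction, and roll it back if it touched a different number of rows than you expected.

```python
import sqlite3


def checked_update(conn, sql, params, expected_rows):
    """Run one UPDATE on the hypothetical `products` table and keep it only
    if it touched exactly `expected_rows` rows; otherwise roll it back."""
    # Snapshot the table first and commit the copy, so it survives even if
    # the update below is rolled back.
    conn.execute("DROP TABLE IF EXISTS products_backup")
    conn.execute("CREATE TABLE products_backup AS SELECT * FROM products")
    conn.commit()

    # Used as a context manager, the connection commits on success and
    # rolls back if an exception escapes the block.
    with conn:
        changed = conn.execute(sql, params).rowcount
        if changed != expected_rows:
            raise RuntimeError(
                f"expected {expected_rows} rows, UPDATE touched {changed}; rolled back"
            )
    return changed


# Demo data: 300 products, all in category 1.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE products (id INTEGER PRIMARY KEY, category_id INTEGER)")
conn.executemany("INSERT INTO products VALUES (?, ?)", [(i, 1) for i in range(1, 301)])
conn.commit()

try:
    # Oops: no WHERE clause, so this would move every product to category 7.
    checked_update(conn, "UPDATE products SET category_id = ?", (7,), expected_rows=3)
except RuntimeError as err:
    print(err)

# The intended change touches exactly three rows and is kept.
checked_update(
    conn, "UPDATE products SET category_id = ? WHERE id IN (1, 2, 3)", (7,), expected_rows=3
)
```

The runaway statement fails the row-count check and gets rolled back, and the backup table is there either way. In MySQL, DDL such as `CREATE TABLE` causes an implicit commit, so there too the backup belongs before the transaction starts.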

Checkpoints along the way make things a little easier

The Time I Botched Up a MySQL Migration

I've never really dropped a production MySQL database (I did drop a marketing database accidentally, but that doesn't count!). But let me tell you about the time I screwed up a MySQL database migration.

I'd been working on a big update to our web application and we were getting ready to release. A part of the update was a large content migration that would generate a lot of new rows in the DB. This migration was estimated to take about 15 minutes on a production workload, and we had scheduled an appropriate amount of downtime. I knew this because I'd already tested everything and confirmed it worked with a production workload (process, hell yeah!).

On the day of the migration, I received a request to tweak the script with a small change. I made the change and ran it on the staging environment to check things out. It seemed fine, so I checked in the update and prepared to run the final migration.

I put up the maintenance message and queued the update script. I monitored the row counts and the progress information, and everything seemed fine.

At first.

About 10 minutes in, I noticed that the script was running slower than I'd anticipated. I didn't panic yet; I'd planned for 30 minutes of downtime, so I was still within the parameters.

At 15 minutes in, it was clear something major was going on. The script that was generating the new rows was taking a lot longer to process. We were a small team, so we decided to power through rather than revert the whole migration. All in all, what should have been a 15-minute job took over 3 hours to complete.

Slowest 15 minutes of my life

The root cause of the issue was that the tweak in the query touched a table that didn't have an index for the data I was accessing. And since I only tested that particular change in a staging environment, I never realized it was going to choke the entire process when run against a much larger production workload.
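If you want to see the difference an index makes, here's a small Python sketch that uses the standard-library `sqlite3` module as a stand-in for MySQL (MySQL's own `EXPLAIN` output looks different). The `content` table, `legacy_ref` column and index name are hypothetical. Without the index, every lookup is a full table scan, so a migration that runs the lookup once per row does work roughly proportional to rows processed times table size: barely noticeable on a small staging dataset, brutal on production.

```python
import sqlite3


def query_plan(conn, sql, params=()):
    """Return the human-readable detail lines of SQLite's EXPLAIN QUERY PLAN."""
    rows = conn.execute("EXPLAIN QUERY PLAN " + sql, params).fetchall()
    return [row[-1] for row in rows]  # the last column holds the detail text


conn = sqlite3.connect(":memory:")
# Hypothetical table that the tweaked migration query reads from.
conn.execute("CREATE TABLE content (id INTEGER PRIMARY KEY, legacy_ref TEXT, body TEXT)")

lookup = "SELECT id FROM content WHERE legacy_ref = ?"

# No index on legacy_ref: the planner has to scan the whole table.
before = query_plan(conn, lookup, ("abc",))

# With an index, the same lookup becomes an index search.
conn.execute("CREATE INDEX idx_content_legacy_ref ON content (legacy_ref)")
after = query_plan(conn, lookup, ("abc",))

print(before)
print(after)
```

Checking the plan of every query a big migration runs, including the "small tweak", costs a few seconds and would have shown the scan before production did.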

The lesson here is that you should always check and double-check everything after a change. No matter how trivial you think the change is. Because of this error, I always question my assumptions whenever I run into a situation that seems impossible. If I'm seeing an impossible error, then it's clearly not impossible.

Everyone Fucks Up

Keep on keeping on

Once you screw up, the best thing you can do is learn from it.

Some of my greatest hits:

- At my first job in sales, gave goods on credit to a customer with a bad credit record. Took me a year to recover the payment.

- Deleted a database with a bunch of leads and only a partial backup.

- Redeployed an old commit instead of a critical security fix and didn't realize till the next day (thankfully it was an unpublished URL and never got hit)

- Ran tests locally while connected to the test environment and wiped the DB

I learned valuable lessons from all of these mistakes. Each and every one of them led to some action on my side that reduced the chance of the problem happening again, whether by my own hands or someone else's.

As the person who committed the mistake, it can feel overwhelming to realize just how badly you fucked up. This is normal. Admit to yourself that you made a mistake and don't look to deflect. Take ownership of the fact and then assess if there's anything that can be done to mitigate the immediate fallout. If there's nothing to be done right now, take a moment and review the process by which you arrived at this end result. This will be useful in what comes next.

Hopefully, you're part of a company culture that doesn't jump to playing the blame game whenever something goes wrong. Dealing with the fallout of a mistake that has an organizational impact is its own topic.

Good luck with your next fuckup!